Visual Reasoning AI: Teaching PTZOptics Cameras to Understand What They See

Your PTZ camera captures thousands of frames per hour. Until now, not a single one of them was understood.

A PTZOptics camera is a remarkable piece of engineering. It pans, tilts, zooms, recalls presets, adjusts color, streams over NDI and SRT — all controllable through a well-documented HTTP API. But for all of that capability, the camera has always been fundamentally passive. It captures what’s in front of it. It has no idea what that is.

Visual reasoning changes that.

What Is Visual Reasoning?

Visual reasoning is the ability of a system to look at an image and answer questions about it in natural language. Not pattern matching against a pre-trained library of objects. Not face-only detection. Actual comprehension.

Point a PTZOptics camera at a conference stage and ask: “Is someone at the podium?” The system answers yes or no. Ask “How many people are seated in the front row?” and you get a number. Ask “Find the person in the red jacket” and you get coordinates — x, y, width, height — precise enough to drive a pan/tilt command.

The technology making this possible is called a Vision Language Model, or VLM. It works much the way the large language models behind ChatGPT work with text, except it processes images and text together. You send it a camera frame and a question. It sends back an answer. The model we use is Moondream, a VLM built specifically for fast, real-time visual reasoning.
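The three kinds of questions above come back in different shapes: a yes/no, a number, or a box. The following is a minimal TypeScript sketch of how an application might normalize the first two. The type and function names are hypothetical and are not part of the Moondream API, and the text parsing is deliberately simple.

```typescript
// Hypothetical shapes for the three kinds of answers described above.
// None of these names come from the Moondream API.
type VisualAnswer =
  | { kind: "yesNo"; value: boolean }
  | { kind: "count"; value: number }
  | { kind: "box"; x: number; y: number; width: number; height: number };

// Interpret a free-text reply to a yes/no question such as
// "Is someone at the podium?". Returns null when the reply is ambiguous.
function parseYesNo(reply: string): VisualAnswer | null {
  const text = reply.trim().toLowerCase();
  if (/^yes\b/.test(text)) return { kind: "yesNo", value: true };
  if (/^no\b/.test(text)) return { kind: "yesNo", value: false };
  return null;
}

// Pull the first integer out of a reply to a counting question such as
// "How many people are seated in the front row?".
function parseCount(reply: string): VisualAnswer | null {
  const match = reply.match(/\d+/);
  return match ? { kind: "count", value: Number(match[0]) } : null;
}
```

Returning null for an ambiguous reply lets the caller skip that frame instead of acting on a guess.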

The important part for PTZOptics users: you describe what you want in English. The AI handles the rest.

Why This Matters for PTZ Cameras Specifically

PTZ cameras have always had the mechanical capability to do remarkable things. Pan to any angle. Tilt with sub-degree precision. Zoom from wide to tight in a second. Recall presets instantly. The HTTP API exposes all of it.

What PTZ cameras have lacked is the intelligence to decide when to do those things.

Auto-tracking exists, of course. But traditional auto-tracking is built on conventional computer vision — it follows faces, or detects motion, or uses infrared markers. Each approach has hard limits:

| Traditional Approach | Limitation |
| --- | --- |
| Face tracking | Can’t follow a person who turns around. Can’t track a non-face object at all. |
| Motion detection | Follows anything that moves. Can’t distinguish between the presenter and an audience member standing up. |
| Marker-based | Requires physical hardware on the subject. Doesn’t scale. |
| Trained object detection | Only tracks objects the model was trained on. New object = new training cycle. |

Visual reasoning removes all of these constraints. Because the VLM understands language, you can track anything you can describe:

- “Person at the podium”

- “Presenter holding the microphone”

- “The white drone on the table”

- “Hand gestures”

- “The scoreboard”

No retraining. No markers. No limitation to faces. Just a text description and a camera feed.

How It Works With PTZOptics Cameras

The architecture is straightforward. A browser-based application captures frames from the camera’s video output, sends them to the Moondream API with a prompt, receives detection coordinates, and translates those coordinates into PTZOptics HTTP API commands.
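One way to picture that pipeline is as four stages wired together. The sketch below is an illustration under stated assumptions, not the project's actual code: every name in it is hypothetical, and each stage is injected so that one pass can be run with stand-ins instead of a real camera or API key.

```typescript
// Normalized bounding box (values between 0 and 1).
interface Box { x: number; y: number; width: number; height: number }

// Each stage is injected so the pass can be exercised without hardware.
// All names here are illustrative, not taken from the project source.
interface TrackerStages {
  captureFrame: () => Promise<string>;                        // e.g. a JPEG data URL
  detect: (frame: string, prompt: string) => Promise<Box | null>;
  toCommand: (box: Box) => string | null;                     // null means hold steady
  sendCommand: (path: string) => Promise<void>;
}

// Run one pass: capture, detect, translate, send.
// Returns the command path that was sent, or null if nothing was sent.
async function trackOnce(stages: TrackerStages, prompt: string): Promise<string | null> {
  const frame = await stages.captureFrame();
  const box = await stages.detect(frame, prompt);
  if (box === null) return null;       // subject not found in this frame
  const command = stages.toCommand(box);
  if (command === null) return null;   // already framed well enough
  await stages.sendCommand(command);
  return command;
}
```

Keeping the stages separate means the detection service or the camera transport can be swapped without touching the loop itself.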

- PTZOptics Camera (video feed)

- Browser captures frame

- Moondream API: “Find the speaker”

- Response: Bounding box [x: 0.20, y: 0.20, w: 0.30, h: 0.60]

- Calculate: Box center is x = 0.20 + 0.30/2 = 0.35, so the subject is left of center by 15%

- PTZOptics API: /cgi-bin/ptzctrl.cgi?ptzcmd&left&3&3

- Camera pans left. Subject centered. Repeat.

The loop runs every 500 milliseconds. The camera makes small corrections continuously, resulting in smooth, natural tracking. A deadzone prevents jitter — if the subject is close enough to center, the camera holds steady.
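The centering decision and the deadzone can be sketched in a few lines of TypeScript. This is a simplified illustration rather than the tracker's real logic. It assumes x and y are the top-left corner of a normalized box, that y grows downward, that a 5% deadzone is reasonable, and that `ptzstop` is the action name for holding still; check each of these against the Moondream and PTZOptics documentation.

```typescript
// Normalized box; x and y are ASSUMED to be the top-left corner (0..1).
interface Box { x: number; y: number; width: number; height: number }

// "ptzstop" is an ASSUMED action name for holding position.
type PtzAction = "left" | "right" | "up" | "down" | "ptzstop";

// Decide which way to move so the box center approaches the frame center.
// deadzone is the "close enough" band, as a fraction of the frame.
function chooseAction(box: Box, deadzone = 0.05): PtzAction {
  const offsetX = box.x + box.width / 2 - 0.5;   // negative: subject is left of center
  const offsetY = box.y + box.height / 2 - 0.5;  // negative: subject is above center
  if (Math.abs(offsetX) <= deadzone && Math.abs(offsetY) <= deadzone) return "ptzstop";
  // Correct the larger error first; one axis per pass keeps the sketch simple.
  if (Math.abs(offsetX) >= Math.abs(offsetY)) return offsetX < 0 ? "left" : "right";
  return offsetY < 0 ? "up" : "down";
}

// Build the command path in the format shown in the walkthrough above.
function ptzPath(action: PtzAction, panSpeed = 3, tiltSpeed = 3): string {
  return `/cgi-bin/ptzctrl.cgi?ptzcmd&${action}&${panSpeed}&${tiltSpeed}`;
}
```

For a box whose left edge is at 0.20 and whose width is 0.30, `ptzPath(chooseAction(box))` produces `/cgi-bin/ptzctrl.cgi?ptzcmd&left&3&3`. A wider deadzone trades centering accuracy for fewer small corrections.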

This runs in a browser tab. The PTZOptics camera doesn’t need new firmware, a software update, or any hardware modification. If your camera has an HTTP API and a video output you can view in a browser, it works today.

Five Things Your PTZOptics Camera Can Do Now

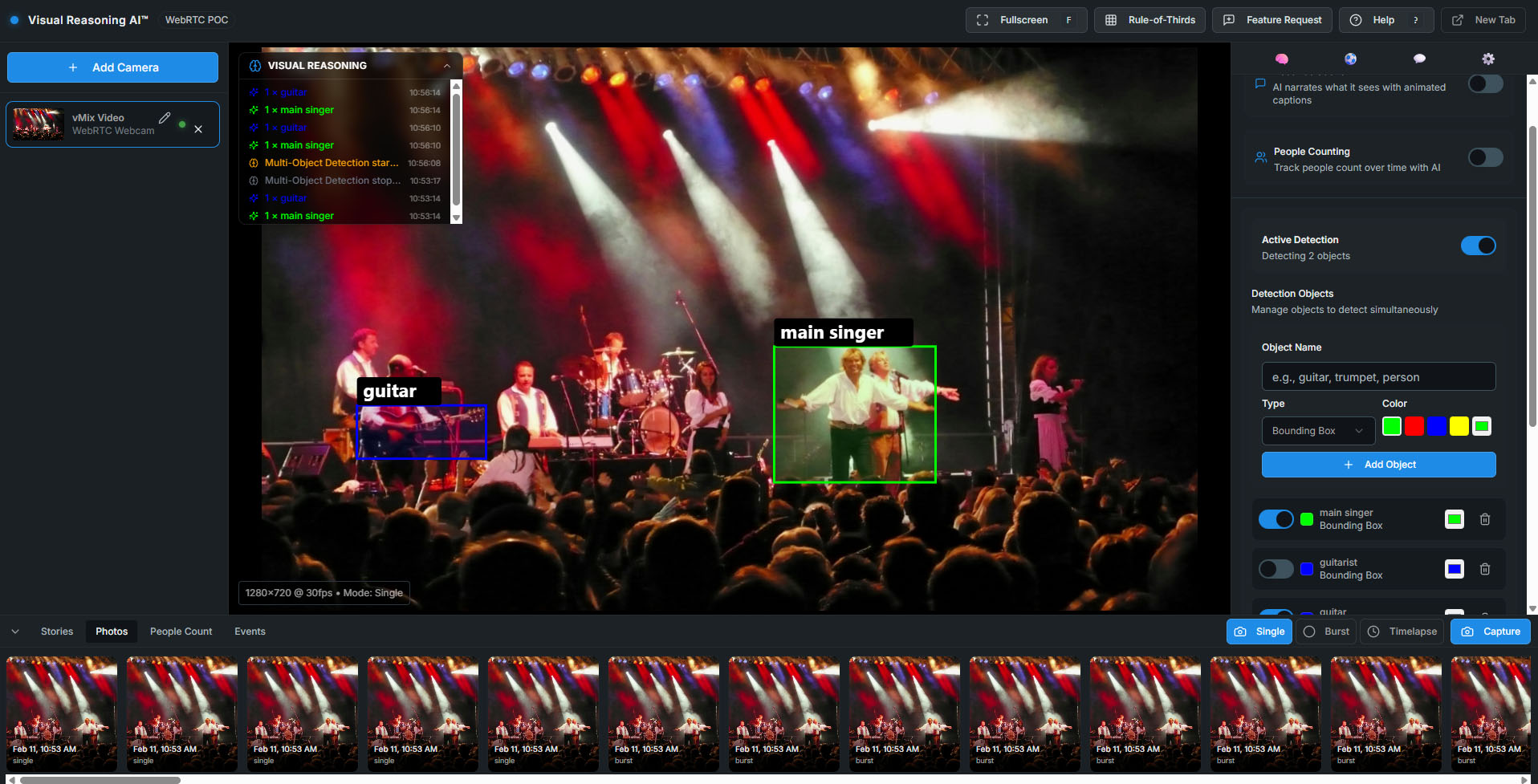

We built 17 open-source tools in the Visual Reasoning Playground to demonstrate what’s possible. Several are designed specifically around PTZOptics camera capabilities.

1. Track Any Object by Description

The PTZ Auto-Tracker is the flagship tool. Type a description, click start, and the camera follows. It supports multiple operation styles — smooth tracking for broadcast, precise centering for presentations, fast response for sports — each tuning the detection rate, movement speed, and deadzone automatically.
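Operation styles like these amount to named bundles of the same three settings. A hedged sketch of that idea follows. The three style names come from the description above, but the structure is hypothetical and every number is a placeholder for illustration, not a value taken from the tool.

```typescript
// The styles come from the text; every number below is a placeholder.
type TrackingStyle = "smooth" | "precise" | "fast";

interface TrackingProfile {
  intervalMs: number;  // time between detections
  speed: number;       // pan/tilt speed sent to the camera
  deadzone: number;    // fraction of the frame treated as "centered"
}

const PROFILES: Record<TrackingStyle, TrackingProfile> = {
  smooth:  { intervalMs: 500, speed: 2, deadzone: 0.10 },  // gentle moves for broadcast
  precise: { intervalMs: 500, speed: 3, deadzone: 0.03 },  // tight centering for presentations
  fast:    { intervalMs: 250, speed: 6, deadzone: 0.05 },  // quick response for sports
};

// Look up the settings for a style chosen in the UI.
function profileFor(style: TrackingStyle): TrackingProfile {
  return PROFILES[style];
}
```

Grouping the settings this way lets an operator pick an intent ("smooth") rather than tune three numbers by hand.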

It works with HTTP authentication, so cameras with access control enabled are fully supported. All PTZOptics models with IP control are compatible.

Try it: PTZOptics Moondream Tracker

2. AI-Powered Framing Suggestions

The Framing Assistant analyzes your camera’s current view and recommends composition adjustments. “Move camera up 5 degrees and zoom in 10% for better framing of the seated subject.” It combines Moondream’s scene understanding with PTZOptics pan/tilt/zoom control to offer — and execute — real-time framing improvements.

For operators who are still learning shot composition, or for automated systems that need to frame shots without human input, this closes a gap that no amount of mechanical precision could close on its own.

Try it: Framing Assistant

3. Intelligent Color Tuning

The PTZ Color Tuner captures a frame, asks the AI to analyze the scene’s color characteristics — overall tone, exposure, white balance, dominant colors — and generates specific adjustment recommendations. Then it applies those adjustments directly through the PTZOptics image parameter API.

| Action | Example Recommendation | PTZOptics API Command |
| --- | --- | --- |
| Analyze | “Scene has warm tungsten lighting. Indoor white balance recommended. Reduce saturation by 1 step.” | (none) |
| Apply | Set white balance mode to indoor | post_image_value&wbmode&1 |
| Apply | Reduce saturation by 1 | post_image_value&saturation&6 |

Brightness, contrast, saturation, sharpness, and white balance mode — all controllable through the API, all adjustable based on what the AI actually sees in the scene. For multi-camera setups where color matching matters, this turns a tedious manual process into a one-click operation.
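Turning a recommendation such as "reduce saturation by 1" into a command is mostly string building plus a range check. The sketch below shows one way to do it. The helper names are hypothetical, and the 0 to 14 value range is an assumption that should be confirmed against the PTZOptics API documentation for your camera model.

```typescript
// Round and clamp to an ASSUMED valid range; real limits vary by parameter.
function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, Math.round(value)));
}

// Build the query in the form shown in the table,
// e.g. "post_image_value&saturation&6".
function imageValueQuery(param: string, value: number, min = 0, max = 14): string {
  return `post_image_value&${param}&${clamp(value, min, max)}`;
}

// Turn a relative recommendation ("reduce saturation by 1") into a command,
// given the parameter's current value.
function adjustBy(param: string, current: number, delta: number): string {
  return imageValueQuery(param, current + delta);
}
```

With a current saturation of 7, `adjustBy("saturation", 7, -1)` yields `post_image_value&saturation&6`, matching the table. Clamping keeps an overeager recommendation from sending an out-of-range value.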

Try it: PTZ Color Tuner

4. Color Matching Across Cameras

Related to the Color Tuner but solving a different problem: the Color Matcher lets you upload a reference image — the look you want — and then analyzes your live camera output against it. The AI compares color temperature, saturation, contrast, brightness, and overall mood, then tells you exactly what to adjust to match.

For productions running three or four PTZOptics cameras that need a consistent look, this replaces the eyeball-and-guess workflow with AI-driven analysis.

Try it: Color Matcher

5. MediaPipe vs. Moondream Tracking Comparison

For the engineers and integrators evaluating their options, the Tracking Comparison tool runs Google’s MediaPipe (local, ~10ms latency, face/hand/pose only) alongside Moondream (cloud, ~200ms latency, tracks anything by description) on the same camera feed simultaneously.

You see the tradeoffs in real time. MediaPipe is faster but limited to what it was trained on. Moondream is slower but can track anything you describe. For some PTZOptics deployments, speed matters most. For others, flexibility wins. This tool helps you make that decision with data instead of guesswork.
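The tradeoff can also be written down as a simple rule of thumb. The helper below is a hypothetical sketch, not part of the comparison tool. It uses the approximate latency figures quoted above and picks a tracker from the target type and a latency budget.

```typescript
// Approximate figures from the comparison above; not measured here.
const MEDIAPIPE = { name: "MediaPipe", latencyMs: 10, targets: ["face", "hand", "pose"] };
const MOONDREAM = { name: "Moondream", latencyMs: 200 };

// Hypothetical helper: pick a tracker from the target type and a latency budget.
// Returns null when neither option fits.
function chooseTracker(target: string, maxLatencyMs: number): string | null {
  const builtIn = MEDIAPIPE.targets.indexOf(target.toLowerCase()) !== -1;
  if (builtIn && MEDIAPIPE.latencyMs <= maxLatencyMs) return MEDIAPIPE.name;
  if (MOONDREAM.latencyMs <= maxLatencyMs) return MOONDREAM.name;
  return null;
}
```

A face with a 50 ms budget goes to MediaPipe, a scoreboard with a 500 ms budget goes to Moondream, and a scoreboard with a 50 ms budget has no match, which is exactly the kind of case the side-by-side tool is meant to surface.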

Try it: Tracking Comparison

What This Means for PTZOptics Integrators and Operators

The PTZOptics HTTP API was designed to be open and accessible. That design decision is paying off in ways that would have been hard to predict even two years ago. Every PTZOptics camera sold in the last several years already has the API built in. No upgrade required. No additional licensing.

What visual reasoning adds is the intelligence layer on top. The API provides the how — how to move the camera, how to adjust the image, how to recall a preset. Visual reasoning provides the when and the why — when to move because the subject shifted, why to adjust color because the lighting changed.

For integrators, this opens a new category of solutions:

- Automated production in houses of worship.

- Intelligent camera coverage in conference rooms.

- Unattended tracking in lecture halls.

Every installation where a PTZOptics camera sits on a mount and someone has to operate it is a candidate for visual reasoning automation.

For operators, it’s not about replacement. It’s about offloading the mechanical work (keeping a subject centered, maintaining consistent color, following a speaker across a stage) so you can focus on the creative decisions that actually require human judgment.

Get Started

Everything is open source and available now.

- Live demo (no install): streamgeeks.github.io/visual-reasoning-playground

- Source code: github.com/streamgeeks/visual-reasoning-playground

- The book: Visual Reasoning AI for Broadcast and ProAV by Paul Richards — the complete guide to building these systems, from first concepts to production deployment.

All you need is a PTZOptics camera on your network, a free Moondream API key, and a browser. Your camera already has the API. Now it has the intelligence to use it.

Paul Richards is CRO at PTZOptics and Chief Streaming Officer at StreamGeeks. He has authored more than 10 books on audiovisual and live streaming technology. The Visual Reasoning Playground is open source under the MIT license. For PTZOptics API documentation, visit docs.ptzoptics.com.